Getting Started with Logs

Overview

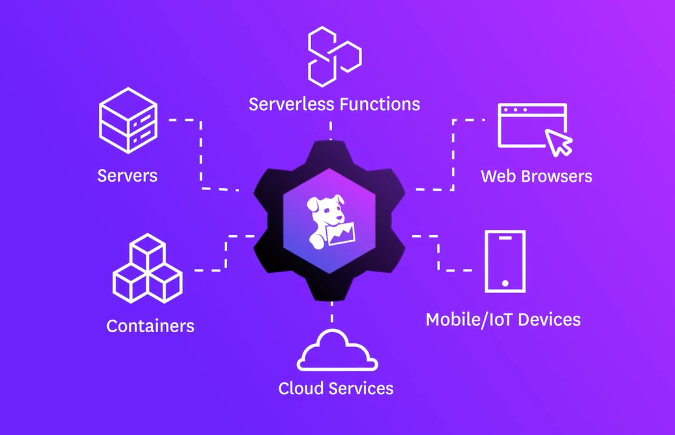

Use Datadog Log Management, also called logs, to collect logs across multiple logging sources, such as your server, container, cloud environment, application, or existing log processors and forwarders. With conventional logging, you have to choose which logs to analyze and retain to maintain cost-efficiency. With Datadog Logging without Limits*, you can collect, process, archive, explore, and monitor your logs without logging limits.

This page shows you how to get started with Log Management in Datadog. If you haven’t already, create a Datadog account.

Configure a logging source

With Log Management, you can analyze and explore data in the Log Explorer, connect Tracing and Metrics to correlate valuable data across Datadog, and use ingested logs for Datadog Cloud SIEM. The lifecycle of a log within Datadog begins at ingestion from a logging source.

Server

Several integrations can forward logs from your server to Datadog. Each integration forwards logs through a log configuration block in its conf.yaml file, which is located in the conf.d/ folder at the root of your Agent’s configuration directory.

    logs:
      - type: file
        path: /path/to/your/integration/access.log
        source: integration_name
        service: integration_name
        sourcecategory: http_web_access

To begin collecting logs from a server:

If you haven’t already, install the Datadog Agent based on your platform.

Note: Log collection requires Datadog Agent v6+.

Collecting logs is not enabled by default in the Datadog Agent. To enable log collection, set logs_enabled to true in your datadog.yaml file.

datadog.yaml

    ## @param logs_enabled - boolean - optional - default: false
    ## @env DD_LOGS_ENABLED - boolean - optional - default: false
    ## Enable Datadog Agent log collection by setting logs_enabled to true.
    logs_enabled: false

    ## @param logs_config - custom object - optional
    ## Enter specific configurations for your Log collection.
    ## Uncomment this parameter and the one below to enable them.
    ## See https://docs.datadoghq.com/agent/logs/
    logs_config:

      ## @param container_collect_all - boolean - optional - default: false
      ## @env DD_LOGS_CONFIG_CONTAINER_COLLECT_ALL - boolean - optional - default: false
      ## Enable container log collection for all the containers (see ac_exclude to filter out containers)
      container_collect_all: false

      ## @param logs_dd_url - string - optional
      ## @env DD_LOGS_CONFIG_DD_URL - string - optional
      ## Define the endpoint and port to hit when using a proxy for logs. The logs are forwarded in TCP
      ## therefore the proxy must be able to handle TCP connections.
      logs_dd_url: <ENDPOINT>:<PORT>

      ## @param logs_no_ssl - boolean - optional - default: false
      ## @env DD_LOGS_CONFIG_LOGS_NO_SSL - optional - default: false
      ## Disable the SSL encryption. This parameter should only be used when logs are
      ## forwarded locally to a proxy. It is highly recommended to then handle the SSL encryption
      ## on the proxy side.
      logs_no_ssl: false

      ## @param processing_rules - list of custom objects - optional
      ## @env DD_LOGS_CONFIG_PROCESSING_RULES - list of custom objects - optional
      ## Global processing rules that are applied to all logs. The available rules are
      ## "exclude_at_match", "include_at_match" and "mask_sequences". More information in Datadog documentation:
      ## https://docs.datadoghq.com/agent/logs/advanced_log_collection/#global-processing-rules
      processing_rules:
        - type: <RULE_TYPE>
          name: <RULE_NAME>
          pattern: <RULE_PATTERN>

      ## @param force_use_http - boolean - optional - default: false
      ## @env DD_LOGS_CONFIG_FORCE_USE_HTTP - boolean - optional - default: false
      ## By default, the Agent sends logs in HTTPS batches to port 443 if HTTPS connectivity can
      ## be established at Agent startup, and falls back to TCP otherwise. Set this parameter to `true` to
      ## always send logs with HTTPS (recommended).
      ## Warning: force_use_http means HTTP over TCP, not HTTP over HTTPS. Please use logs_no_ssl for HTTP over HTTPS.
      force_use_http: true

      ## @param force_use_tcp - boolean - optional - default: false
      ## @env DD_LOGS_CONFIG_FORCE_USE_TCP - boolean - optional - default: false
      ## By default, logs are sent through HTTPS if possible, set this parameter
      ## to `true` to always send logs via TCP. If `force_use_http` is set to `true`, this parameter
      ## is ignored.
      force_use_tcp: true

      ## @param use_compression - boolean - optional - default: true
      ## @env DD_LOGS_CONFIG_USE_COMPRESSION - boolean - optional - default: true
      ## This parameter is available when sending logs with HTTPS. If enabled, the Agent
      ## compresses logs before sending them.
      use_compression: true

      ## @param compression_level - integer - optional - default: 6
      ## @env DD_LOGS_CONFIG_COMPRESSION_LEVEL - boolean - optional - default: false
      ## The compression_level parameter accepts values from 0 (no compression)
      ## to 9 (maximum compression but higher resource usage). Only takes effect if
      ## `use_compression` is set to `true`.
      compression_level: 6

      ## @param batch_wait - integer - optional - default: 5
      ## @env DD_LOGS_CONFIG_BATCH_WAIT - integer - optional - default: 5
      ## The maximum time the Datadog Agent waits to fill each batch of logs before sending.
      batch_wait: 5

      ## @param open_files_limit - integer - optional - default: 500
      ## @env DD_LOGS_CONFIG_OPEN_FILES_LIMIT - integer - optional - default: 500
      ## The maximum number of files that can be tailed in parallel.
      ## Note: the default for Mac OS is 200. The default for
      ## all other systems is 500.
      open_files_limit: 500

      ## @param file_wildcard_selection_mode - string - optional - default: `by_name`
      ## @env DD_LOGS_CONFIG_FILE_WILDCARD_SELECTION_MODE - string - optional - default: `by_name`
      ## The strategy used to prioritize wildcard matches if they exceed the open file limit.
      ##
      ## Choices are `by_name` and `by_modification_time`.
      ##
      ## `by_name` means that each log source is considered and the matching files are ordered
      ## in reverse name order. While there are less than `logs_config.open_files_limit` files
      ## being tailed, this process repeats, collecting from each configured source.
      ##
      ## `by_modification_time` takes all log sources and first adds any log sources that
      ## point to a specific file. Next, it finds matches for all wildcard sources.
      ## This resulting list is ordered by which files have been most recently modified
      ## and the top `logs_config.open_files_limit` most recently modified files are
      ## chosen for tailing.
      ##
      ## WARNING: `by_modification_time` is less performant than `by_name` and will trigger
      ## more disk I/O at the configured wildcard log paths.
      file_wildcard_selection_mode: by_name

      ## @param max_message_size_bytes - integer - optional - default: 256000
      ## @env DD_LOGS_CONFIG_MAX_MESSAGE_SIZE_BYTES - integer - optional - default : 256000
      ## The maximum size of single log message in bytes. If maxMessageSizeBytes exceeds
      ## the documented API limit of 1MB - any payloads larger than 1MB will be dropped by the intake.
      ## https://docs.datadoghq.com/api/latest/logs/
      max_message_size_bytes: 256000

Restart the Datadog Agent.

Follow the integration activation steps or the custom files log collection steps on the Datadog site.

Note: If you’re collecting logs from custom files and need examples for tail files, TCP/UDP, journald, or Windows Events, see Custom log collection.
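As a rough illustration of custom log collection, the sketch below shows what a custom conf.yaml in the Agent’s conf.d/ folder might look like, with one tailed file and one TCP listener. The folder name my_app.d, the path, the port, and the service, source, and rule names are all hypothetical placeholders. The per-source log_processing_rules key is taken from Datadog’s advanced log collection documentation rather than from this page, so check it against the version of the Agent you run.

```yaml
# conf.d/my_app.d/conf.yaml (hypothetical location and names)
logs:
  # Tail a custom log file written by your application.
  - type: file
    path: /var/log/my_app/app.log   # placeholder path
    service: my_app                 # placeholder service name
    source: custom                  # placeholder source name
    # Optional per-source rule: drop lines that match the pattern.
    log_processing_rules:
      - type: exclude_at_match
        name: exclude_healthchecks  # placeholder rule name
        pattern: GET /healthcheck

  # Listen for logs that an application sends over TCP.
  - type: tcp
    port: 10518                     # placeholder port
    service: my_app
    source: custom
```

After adding or changing a file like this, restart the Agent so the new sources are picked up.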

Container

As of Datadog Agent v6, the Agent can collect logs from containers. Each containerization service has specific configuration instructions based on where the Agent is deployed or run, or how logs are routed.

For example, Docker has two types of Agent installation available: installing the Agent on your host, where it is external to the Docker environment, or deploying a containerized version of the Agent in your Docker environment.
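For the containerized option, a Docker Compose service might look roughly like the sketch below. The two DD_LOGS_* environment variables are the ones documented in the datadog.yaml template on this page. The image tag, the API key placeholder, and the volume mounts are assumptions based on Datadog’s Docker instructions, so confirm them against the in-app instructions before use.

```yaml
# docker-compose.yaml (illustrative sketch, not a complete Agent setup)
services:
  datadog-agent:
    image: gcr.io/datadoghq/agent:7          # assumed image and tag
    environment:
      - DD_API_KEY=<DATADOG_API_KEY>         # placeholder, supply your own key
      - DD_LOGS_ENABLED=true                 # same effect as logs_enabled: true
      - DD_LOGS_CONFIG_CONTAINER_COLLECT_ALL=true   # collect logs from all containers
    volumes:
      # Assumed mounts: Docker socket for container discovery,
      # container log files, and a directory for the Agent's run state.
      - /var/run/docker.sock:/var/run/docker.sock:ro
      - /var/lib/docker/containers:/var/lib/docker/containers:ro
      - /opt/datadog-agent/run:/opt/datadog-agent/run:rw
```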

Kubernetes requires that the Datadog Agent run in your Kubernetes cluster, and log collection can be configured using a DaemonSet spec, Helm chart, or with the Datadog Operator.
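If you use the Helm chart, log collection is typically switched on through the chart’s values file. The fragment below is a minimal sketch that assumes the datadog.logs.enabled and datadog.logs.containerCollectAll keys of the Datadog Helm chart. Verify the key names against the chart version you deploy.

```yaml
# values.yaml fragment for the Datadog Helm chart (sketch; key names assumed)
datadog:
  logs:
    enabled: true              # turn on log collection in the Agent
    containerCollectAll: true  # collect logs from every container in the cluster
```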

To begin collecting logs from a container service, follow the in-app instructions.

Cloud

You can forward logs from multiple cloud providers, such as AWS, Azure, and Google Cloud, to Datadog. Each cloud provider has its own set of configuration instructions.

For example, AWS service logs are usually stored in S3 buckets or CloudWatch Log groups. You can subscribe to these logs and forward them to an Amazon Kinesis stream to then forward them to one or multiple destinations. Datadog is one of the default destinations for Amazon Kinesis Delivery streams.

To begin collecting logs from a cloud service, follow the in-app instructions.

Client

Datadog permits log collection from clients through SDKs or libraries. For example, use the datadog-logs SDK to send logs to Datadog from JavaScript clients.

To begin collecting logs from a client, follow the in-app instructions.

Other

If you’re using existing logging services or utilities such as rsyslog, Fluentd, or Logstash, Datadog offers plugins and log forwarding options.

If you don’t see your integration, you can type it in the other integrations box to be notified when the integration becomes available.

To begin collecting logs from one of these services or utilities, follow the in-app instructions.

Explore your logs

Once a logging source is configured, your logs are available in the Log Explorer. This is where you can filter, aggregate, and visualize your logs.

For example, if you have logs flowing in from a service that you wish to examine further, filter by service. You can further filter by status, such as ERROR, and select Aggregate by Patterns to see which part of your service is logging the most errors.

Aggregate your logs by Field of Source and switch to the Top List visualization option to see your top logging services. Select a source, such as error, and select View Logs from the dropdown menu. The side panel populates with logs based on error, so you can quickly see which hosts and services require attention.

What’s next?

Once a logging source is configured, and your logs are available in the Log Explorer, you can begin to explore a few other areas of log management.

Log configuration

- Set attributes and aliasing to unify your logs environment.

- Control how your logs are processed with pipelines and processors.

- As Logging without Limits* decouples log ingestion and indexing, you can configure your logs by choosing which to index, retain, or archive.

Log correlation

- Connect logs and traces to exact logs associated with a specific env, service, or version.

- If you’re already using metrics in Datadog, you can correlate logs and metrics to gain context of an issue.
